You know what I just realized: in cases where you have to base64 encode/decode a lot, more time might be spent there than anywhere else. Something like this could probably help a lot: GitHub - mitschabaude/fast-base64: Fastest base64 on the web, with Wasm + SIMD

What do we need to base64 encode/decode here? Or is it just off topic? However, good to know! Thanks!

I am trying to encode the 8k jpg into ktx2. I’ve cloned this repo: GitHub - BinomialLLC/basis_universal: Basis Universal GPU Texture Codec

Compiled it, but it seems to have been stuck for more than half an hour:

Maybe you could give it a try.

The bad thing about WebGPU for me is that I finally started to get the WebGL stuff and now I need to learn WebGPU. Whatever…

I’m looking at about 308 ms for the jpg and 90 ms for the png, with an i7 8700k.

Knowing that this may also be a POT (power-of-two) consideration, with a logarithmic relationship to file size, is a good clue as well. Some great information.
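For anyone following along, here is a minimal sketch of what "POT" means for texture dimensions: a size is power-of-two when it is 1, 2, 4, ..., 4096, 8192, and so on. The helper names below are hypothetical (they are not Babylon.js APIs), and the 7680 width is only an assumed example of a non-POT "8k" image, not a number taken from this thread.

```typescript
// Sketch only: these helpers are hypothetical, not part of any library.

/** True when n is a positive integer power of two (1, 2, 4, ..., 8192, ...). */
function isPowerOfTwo(n: number): boolean {
  // A power of two has exactly one bit set, so n & (n - 1) clears it to 0.
  return Math.floor(n) === n && n > 0 && (n & (n - 1)) === 0;
}

/** Smallest power of two that is >= n (for n >= 1). */
function ceilPowerOfTwo(n: number): number {
  let p = 1;
  while (p < n) {
    p *= 2;
  }
  return p;
}

// Assumed example: a 7680 px wide image is not POT; 8192 is the next POT size up.
const assumedWidth = 7680;
const potWidth = isPowerOfTwo(assumedWidth) ? assumedWidth : ceilPowerOfTwo(assumedWidth);
console.log(assumedWidth, "->", potWidth);
```

Resizing to the POT size in an image editor before loading is one way to compare timings between the two cases.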

Did you try the 8k textures as well? I am really curious about the numbers on your machine.

Did u try Babylon.js/dist/preview release/basisTranscoder at master · BabylonJS/Babylon.js · GitHub ?

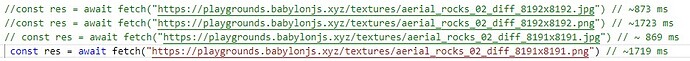

Here’s a screenshot of what my timings are in that same playground for each of the 4 links. POT seemed to actually make no difference for me here.

Yeah, I think that's the case for those ridiculously large textures. Did you try the updated PG I linked? I think it's #16.

I haven’t yet, but I am looking at it right now. I did some tests with ktx2 textures though. EDIT3: it seems to be the same stuff.

EDIT: was it addressed to me?

EDIT2: what?

![]()

I suppose this is not the real error.

I'll take a look when I get home.

Nice, that confirms power of 2 is significant for reasonable size textures: 300ms to 180ms, down to 49ms. That Pixlr online image editor is super convenient, glad I found it. I put it on my bookmarks bar.

Somewhat unrelated, but I also found this open source catalog of super high quality materials from AMD: MaterialX Library

OK, put me to work, what did you want me to look at? BTW, check this out and try running it locally. It preloads a bunch of textures and sounds using a service worker, with a GUI to delete the cache for testing.

GitHub - jeremy-coleman/vite-sw-prefetch-cacheness. I have an idea, but we need a way to create file manifests for GitHub repos. Any clever ideas that won’t run into a rate limit, require any auth tokens, or require downloading a repo?

I was already sleeping but had nightmares… The matrix is playing with me. So, the transcoder you’ve linked here is not working for me. Any ideas?

Sir, yes sir!

EDIT: Cool stuff!!

Hmm, I think the problem is probably that the module needs to be initialized first, but I actually think that is an outdated version. There have been some bugs in the Draco compression libs, but I don't know much about that, or about the relationship between Draco, glTF, and Basis. They were talking about it here:

Consider adding gzip or brotli compression for Draco and Basis WASM binaries

labris linked this one, probably better than anything else.
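On the "initialize the module first" guess: a common pattern is to wrap the async loader so it runs once and every caller waits on the same promise. This is only a sketch; `initOnce`, `getTranscoder` and the placeholder loader are hypothetical names, and the real transcoder has its own loading API that is not shown here.

```typescript
// Sketch only: initOnce and the placeholder loader are hypothetical,
// standing in for whatever actually fetches and initializes a Wasm module.
type Loader<T> = () => Promise<T>;

/** Wraps a loader so it runs at most once; all callers share the same promise. */
function initOnce<T>(load: Loader<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) {
      pending = load(); // first caller triggers the load
    }
    return pending; // later callers reuse the same promise
  };
}

// Usage with a placeholder loader that just counts how often it runs.
let loadCount = 0;
const getTranscoder = initOnce(() => {
  loadCount += 1;
  return Promise.resolve({ ready: true }); // placeholder for a real module
});

const first = getTranscoder();
const second = getTranscoder();
first.then((mod) => console.log("ready:", mod.ready, "loads:", loadCount));
```

One caveat of this simple version: if the load fails, the rejected promise is cached too, so a retry would need the cache to be reset.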

Sorry, I don’t get this. Rate limit, tokens, repo downloading? For what reason?

to get a list of files for a service worker to prefetch
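To make the idea concrete, here is a rough sketch of the consuming side: given a manifest (assumed here to be a flat list of repo-relative paths), build the list of URLs a service worker could prefetch. The function name, manifest shape, and example base URL are all assumptions for illustration. It does not solve the question of how to produce the manifest for a GitHub repo.

```typescript
// Sketch only: buildPrefetchList and the manifest shape are hypothetical.

/** True when path ends with the given extension, case-insensitively. */
function hasExtension(path: string, ext: string): boolean {
  return path.toLowerCase().slice(-ext.length) === ext.toLowerCase();
}

/** Turns repo-relative paths into absolute URLs, keeping only wanted file types. */
function buildPrefetchList(manifest: string[], baseUrl: string, extensions: string[]): string[] {
  const base = baseUrl.replace(/\/+$/, "") + "/"; // exactly one trailing slash
  const urls: string[] = [];
  for (const path of manifest) {
    const wanted = extensions.some((ext) => hasExtension(path, ext));
    const url = base + path.replace(/^\/+/, "");
    if (wanted && urls.indexOf(url) === -1) {
      urls.push(url); // skip duplicates
    }
  }
  return urls;
}

// Example with made-up paths and a made-up base URL.
const prefetchUrls = buildPrefetchList(
  ["textures/wood.ktx2", "sounds/click.mp3", "README.md", "/textures/wood.ktx2"],
  "https://example.com/assets/",
  [".ktx2", ".mp3"]
);
console.log(prefetchUrls);
// In a service worker's install handler, a list like this could be passed to
// cache.addAll(prefetchUrls) after opening a cache with caches.open(...).
```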

Unfortunately still:

![]()

The design is awesome! Now digging the code.