So it’s taken me a little while to put together a good PG repro for the transparency issue I mentioned in my first post, mainly because it took me a while to notice that my PG was going wrong in the same way as the screenshot I posted above.

To recap:

GLTFs that use the transmission extension, once loaded, screw up the colours of whatever’s behind them.

And here’s a good playground to test this on:

https://playground.babylonjs.com/#WGZLGJ#2910

This actually has nothing to do with NME materials, so it seems like it’s a general issue?

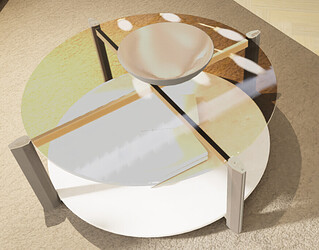

When you load up the PG you’ll see this:

There are two chrome spheres, with one glass sphere and a glass box in front of them.

The glass sphere is set up the standard way: create a PBRMaterial, make it transparent, then set its index of refraction and roughness. This seems to be a cheaper, PBR-lite way of doing glass in Babylon, rendered with alpha blending instead of the multi-pass approach used for ‘proper’ PBR glass, so it can have some noticeable artefacts. Because its reflections are alpha-blended rather than additive, they behave incorrectly when the background behind them is brighter than the reflection itself.

Which is fine; I don’t think there’s a better way of rendering glass as just an alpha-blended material.
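
To put rough numbers on that artefact, here's a minimal single-channel sketch comparing an alpha-blended reflection with an additive one. It is plain TypeScript, not Babylon's actual shader code, and the function names and values are made up for illustration:

```typescript
// Illustrative only: single-channel linear values, not Babylon's real shader maths.

// Classic "over" alpha blending: the reflection replaces part of the background.
function alphaBlended(reflection: number, background: number, alpha: number): number {
  return reflection * alpha + background * (1 - alpha);
}

// Additive compositing: the background passes through (scaled by the tint)
// and the reflection is added on top.
function additive(reflection: number, background: number, tint: number): number {
  return background * tint + reflection;
}

const reflection = 0.2; // dim reflection (hypothetical value)
const brightBackground = 0.8; // background brighter than the reflection

// Alpha blending drags the bright background down towards the dimmer reflection...
const blended = alphaBlended(reflection, brightBackground, 0.5);
// ...whereas clear glass (tint = 1) should never end up darker than what is behind it.
const added = additive(reflection, brightBackground, 1.0);

console.log({ blended, added, background: brightBackground });
```

With a background brighter than the reflection, the blended result lands below the background value, while the additive result stays at or above it.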

However, when we import a GLTF with the transmission extension like the glass box, Babylon sets up that material in a slightly cleverer way, which I don’t fully know how to replicate in code. It seems like it actually gets rendered as an opaque object that resolves and samples the opaqueSceneTexture containing the objects that were rendered to the scene before it. This allows it to draw physically correct glass that can both attenuate and add to the colour behind it - as well as being able to sample opaqueSceneTexture at lower mips to fake frosted glass. Very cool.

But it seems like there’s a gamma issue when opaqueSceneTexture gets sampled by the glass box material. When you load the scene with its default settings, the box just looks kind of dark, but it should actually be fully transmissive, just like the glass sphere is. Its tint colour is full white, so we should really be seeing only its reflection.

In fact the behaviour is obviously wrong when you adjust exposure:

The glass box gets disproportionately darker when exposure is decreased, but when exposure is increased it actually becomes luminous and appears to add light to the scene.
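
One way this kind of behaviour could arise, purely as an assumption and not something confirmed from Babylon's code, is if the background sampled by the glass already has exposure applied and then goes through an extra sRGB-to-linear style power curve. A minimal sketch of that hypothesis, with made-up function names and a gamma of 2.2 picked for illustration:

```typescript
// Hypothetical model: single-channel linear radiance, exposure as a plain multiplier.
const ASSUMED_GAMMA = 2.2; // assumption: an extra decode curve applied by mistake

// Background pixel viewed directly: just radiance scaled by exposure.
function seenDirectly(radiance: number, exposure: number): number {
  return radiance * exposure;
}

// ASSUMPTION: the texture sampled by the glass already contains the exposed value,
// and that value is then pushed through an unnecessary power curve.
function seenThroughGlass(radiance: number, exposure: number): number {
  const sampled = radiance * exposure; // exposure already baked into the sample
  return Math.pow(sampled, ASSUMED_GAMMA); // extra, incorrect decode step
}

// Ratio of "through the glass" to "direct"; 1 would mean perfectly clear glass.
function glassToDirectRatio(radiance: number, exposure: number): number {
  return seenThroughGlass(radiance, exposure) / seenDirectly(radiance, exposure);
}

// Print the ratio for a mid-grey background at a few exposure settings.
for (const exposure of [0.5, 1, 4]) {
  console.log(exposure, glassToDirectRatio(0.5, exposure));
}
```

Under this assumption the glass is darker than its surroundings while the exposed value is below 1, darkens faster than the rest of the scene as exposure drops, and overshoots once the exposed value goes above 1, which at least qualitatively matches what the PG shows.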